The same principles and ease of use you expect from Vercel, now for your agentic applications.

For over a decade, Vercel has helped teams develop, preview, and ship everything from static sites to full-stack apps. That mission shaped the Frontend Cloud, now relied on by millions of developers and powering some of the largest sites and apps in the world.

Now, AI is changing what and how we build. Interfaces are becoming conversations and workflows are becoming autonomous.

We've seen this firsthand while building v0 and working with AI teams like Browserbase and Decagon. The pattern is clear: developers need expanded tools, new infrastructure primitives, and even more protections for their intelligent, agent-powered applications.

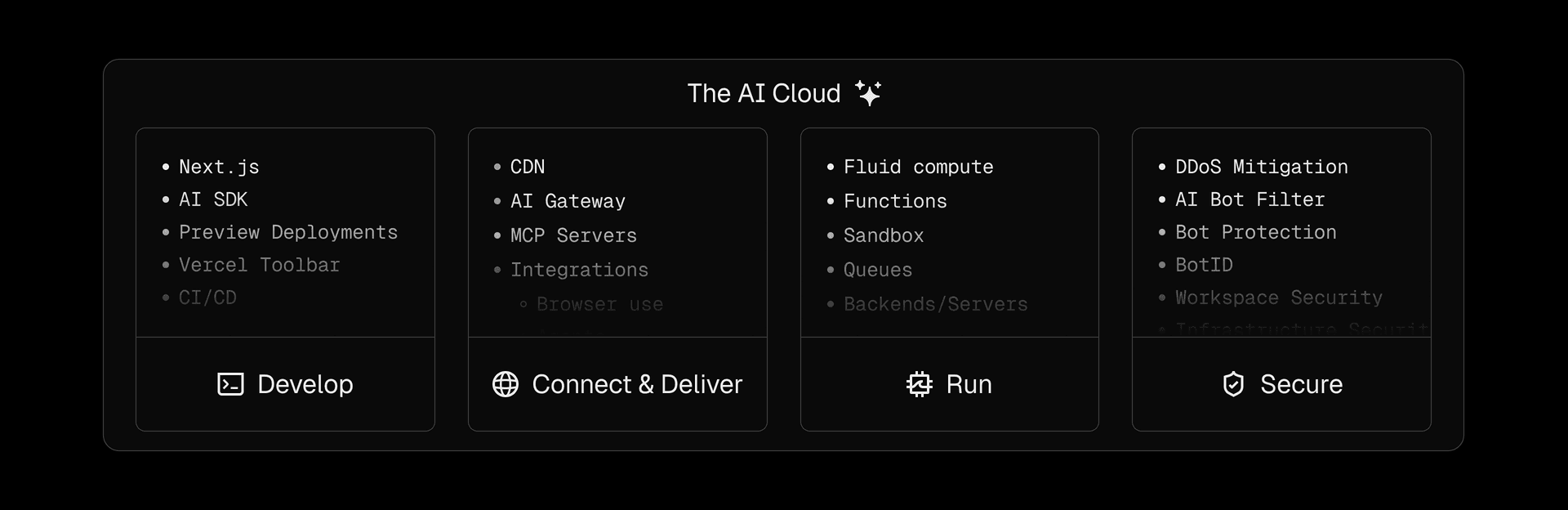

At Vercel Ship, we introduced the AI Cloud: a unified platform that lets teams build AI features and apps with the right tools to stay flexible, move fast, and be secure, all while focusing on their products, not infrastructure.

The AI Cloud builds on the same foundation as the Frontend Cloud, extending its capabilities to support agentic workloads.

The AI Cloud introduces new AI-first tools and primitives, like:

- AI SDK and AI Gateway to integrate with any model or tool

- Fluid compute with Active CPU pricing for high-concurrency, low-latency, cost-efficient AI execution

- Tool support, MCP servers, and queues for autonomous actions and background task execution

- Secure sandboxes to run untrusted agent-generated code

These solutions all work together so teams can build and iterate on anything from conversational AI frontends to an army of end-to-end autonomous agents, without infrastructure or additional resource overhead.

A unified, self-driving platform

What makes the AI Cloud powerful is the same principle that made the Frontend Cloud successful: infrastructure should emerge from code, not manual configuration. Framework-defined infrastructure turns your application logic into running cloud services, automatically. This is even more important as we see agents and AI shipping more code than ever before.

With the AI Cloud, you (or your agents) can build AI apps without ever touching low-level infrastructure.

Take AI SDK, which makes it easy to work with LLMs by standardizing the code you write across many providers, so you can swap models without rewriting application logic. It simplifies AI inference by generalizing many provider-specific processes behind one interface.

When the AI SDK is deployed in a Vercel application, calls are routed to the appropriate vendor. They can also go through the AI Gateway, a global provider-agnostic layer that manages API keys and provider accounts, and improves availability with retries, fallbacks, and performance optimizations.

app/api/flights/route.ts

    import { streamText, tool } from 'ai';
    import { z } from 'zod';

    export async function POST(req: Request) {
      const { prompt } = await req.json();

      const result = streamText({
        model: 'openai/gpt-4o', // This will access the model via AI Gateway
        prompt,
        tools: {
          weather: tool({
            description: 'Get the weather in a location',
            // AI SDK 5 names this field `inputSchema`; earlier versions call it `parameters`
            inputSchema: z.object({
              location: z.string(),
            }),
            execute: async ({ location }) => {
              // Encode the user-supplied location; the API key parameter is omitted for brevity
              const res = await fetch(
                `https://api.weatherapi.com/v1/current.json?q=${encodeURIComponent(location)}`
              );
              const data = await res.json();
              return { location, weather: data };
            },
          }),
        },
      });

      // Stream the generated text back to the client
      return result.toTextStreamResponse();
    }

A sample AI API endpoint using AI SDK and AI Gateway. It is structured like a traditional endpoint: it accepts a prompt from the frontend and streams a response back, with a single package handling the model call.

In this example, AI SDK defines the interaction, while AI Gateway handles the execution. Together, they reduce the overhead of building and scaling AI features that can actually reason and derive intent.
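The retries and fallbacks attributed to AI Gateway above can be pictured with a small, provider-agnostic sketch. This is not the gateway's implementation or API: the `Provider` type, the `generateWithFallback` function, and the `retriesPerProvider` parameter are all hypothetical names chosen for illustration, and real gateways typically add backoff, timeouts, and health tracking.

```typescript
// Hypothetical shape of a provider: a label plus a function that
// turns a prompt into generated text (or throws on failure).
interface Provider {
  name: string;
  call: (prompt: string) => Promise<string>;
}

// Try providers in order. Each provider gets one initial attempt plus
// `retriesPerProvider` retries before we fall back to the next one.
async function generateWithFallback(
  providers: Provider[],
  prompt: string,
  retriesPerProvider = 1,
): Promise<{ provider: string; text: string }> {
  const errors: string[] = [];

  for (const provider of providers) {
    for (let attempt = 0; attempt <= retriesPerProvider; attempt++) {
      try {
        const text = await provider.call(prompt);
        return { provider: provider.name, text };
      } catch (err) {
        // Record the failure and keep going: retry, then fall back.
        errors.push(`${provider.name} attempt ${attempt + 1}: ${String(err)}`);
      }
    }
  }

  // Every provider exhausted its attempts.
  throw new Error(`All providers failed:\n${errors.join("\n")}`);
}
```

Called with a list such as `[primary, secondary]`, the function only reaches `secondary` after `primary` has failed its initial attempt and every retry, which is the ordering a caller would usually want from a fallback chain.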

These AI calls often run as simple functions that must scale instantly. But unlike typical workloads, LLM interactions frequently involve wait times and long idle periods. This breaks the operational model of traditional serverless, which isn't efficient during inactivity. AI workloads need a compute model that handles both burst and idle with minimal overhead.

AI Cloud compute

At the core of the AI Cloud is Fluid compute, which optimizes for these workloads while eliminating traditional serverless and server tradeoffs such as cold starts, manual scaling, overprovisioning, and inefficient concurrency.

Fluid deploys with the serverless model while intelligently reusing existing resources before scaling to create new ones. With Active CPU pricing, you not only use fewer resources, you also pay compute rates only while your code is actively executing.
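The idea of reusing existing resources before scaling out can be illustrated with a toy scheduler. This is a minimal sketch under stated assumptions, not how Fluid compute is implemented: `InstancePool`, `maxConcurrency`, `acquire`, and `release` are hypothetical names, and the model ignores real-world concerns such as memory pressure, regions, and instance shutdown.

```typescript
// One running function instance and how many requests it is handling.
interface Instance {
  id: number;
  inFlight: number;
}

// Toy pool: route each request to an existing instance with spare
// capacity, and create a new instance only when every one is full.
class InstancePool {
  private instances: Instance[] = [];
  private nextId = 1;
  private maxConcurrency: number;

  constructor(maxConcurrency: number) {
    this.maxConcurrency = maxConcurrency;
  }

  // Assign a request to an instance and return that instance.
  acquire(): Instance {
    let target = this.instances.find(
      (instance) => instance.inFlight < this.maxConcurrency,
    );
    if (!target) {
      // No spare capacity anywhere: scale out by one instance.
      target = { id: this.nextId++, inFlight: 0 };
      this.instances.push(target);
    }
    target.inFlight++;
    return target;
  }

  // Mark one request on the given instance as finished.
  release(instance: Instance): void {
    if (instance.inFlight > 0) instance.inFlight--;
  }

  // Number of instances created so far.
  get size(): number {
    return this.instances.length;
  }
}
```

With `maxConcurrency` set to 2, three overlapping requests need only two instances, whereas a strict one-request-per-instance model would need three. Requests that spend most of their time waiting on a model response are the case where this kind of sharing helps most.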

For workloads with high idle time, such as AI inference, agents, or MCP servers that wait on external responses, this resource efficiency can reduce costs by up to 90% compared to traditional serverless. This efficiency also applies during an AI agent's tool use.
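To see where a figure like "up to 90%" could come from, the arithmetic can be sketched for a request that is open for a long time but executes code only briefly. The `Invocation` type, the function names, and the numbers below are hypothetical, and the model assumes a single per-millisecond rate; real pricing may include other components (such as memory) that this ignores.

```typescript
// One request: how long it stayed open vs. how long code actually ran.
interface Invocation {
  wallClockMs: number; // total duration, including waiting on the model
  activeCpuMs: number; // time spent actively executing code
}

// Cost if every millisecond of wall-clock time is billed.
function wallClockCost(invocations: Invocation[], ratePerMs: number): number {
  return invocations.reduce((sum, inv) => sum + inv.wallClockMs * ratePerMs, 0);
}

// Cost if only actively executing milliseconds are billed.
function activeCpuCost(invocations: Invocation[], ratePerMs: number): number {
  return invocations.reduce((sum, inv) => sum + inv.activeCpuMs * ratePerMs, 0);
}

// Fraction of billed time saved by switching from wall-clock to active-CPU
// billing. The rate cancels out, so it is computed from durations alone.
function savingsFraction(invocations: Invocation[]): number {
  const wall = invocations.reduce((sum, inv) => sum + inv.wallClockMs, 0);
  const active = invocations.reduce((sum, inv) => sum + inv.activeCpuMs, 0);
  return wall === 0 ? 0 : 1 - active / wall;
}

// Hypothetical agent call: open for 10 s, but only 1 s of actual execution.
const example: Invocation[] = [{ wallClockMs: 10_000, activeCpuMs: 1_000 }];
console.log(savingsFraction(example).toFixed(2));
```

In this made-up case the request is idle for nine of its ten seconds, so billing only active time removes about 90% of the billed duration. Workloads that are CPU-bound for most of their lifetime would see a much smaller difference.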